ESUN (Ethernet for Scale-Up Networking) is now official. Announced at the OCP Global Summit, ESUN brings together chipmakers, switch vendors, hyperscalers, and model labs to make open, standards-based Ethernet viable for the scale-up part of AI clusters: the ultra-low-latency fabric that ties GPUs together inside a rack or pod. With backers that include Meta, Nvidia, AMD, Microsoft, OpenAI, Broadcom, Cisco, Marvell, Arista, and others, the effort aims to turn Ethernet from a “good enough for scale-out” choice into a first-class option for GPU-to-GPU traffic, where InfiniBand and proprietary interconnects have dominated.

What ESUN actually is (and isn’t)

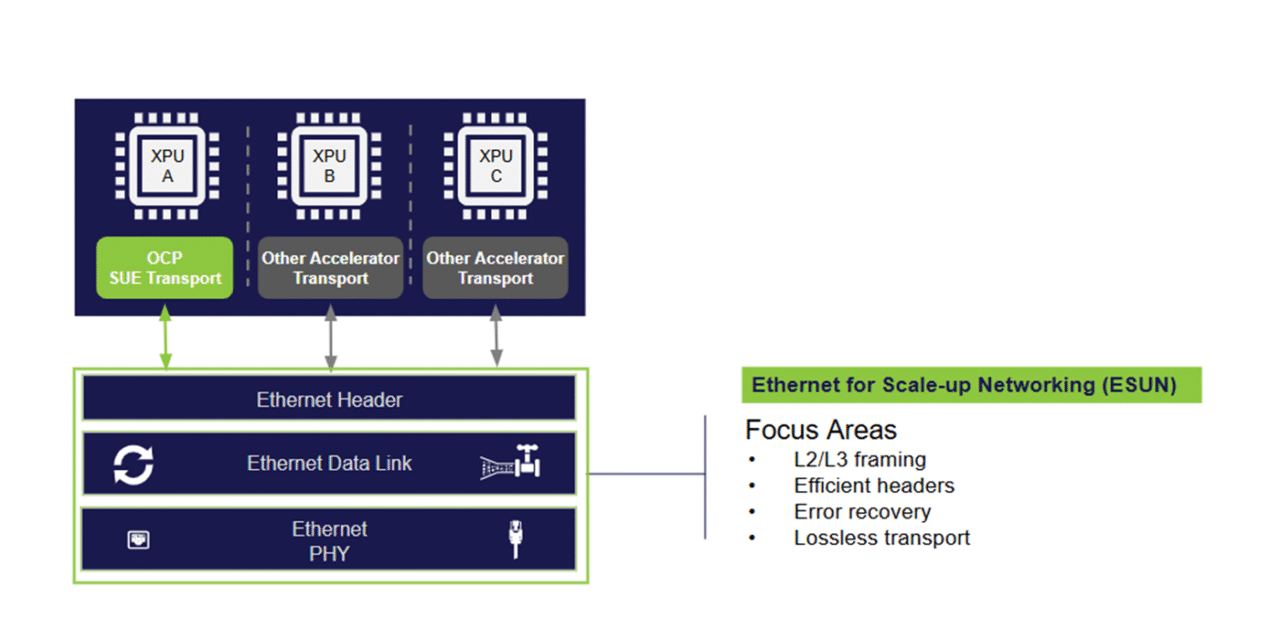

ESUN is an OCP workstream focused on the switching and framing pieces of Ethernet for scale-up networks: think loss-aware fabrics, traffic classes, congestion control, and interoperability across XPU NICs and Ethernet switch ASICs. The scope is deliberately tight. ESUN does not define application-layer protocols or host-side agent logic; it concentrates on the parts that move tensors quickly and predictably between accelerators. In practice, that means codifying behaviors vendors already prototype behind closed doors and publishing open specifications that multiple silicon families can implement.

Why ESUN matters now

AI training has outgrown simple topologies. Modern clusters hinge on two distinct planes: scale-up (fast, rack-local GPU meshes) and scale-out (the network that links racks and pods across the cluster). InfiniBand set the bar for low latency and loss handling; Ethernet won scale-out with cost, tooling, and talent. ESUN tries to unify those worlds by pushing Ethernet deeper into the rack without giving up the determinism that model training needs. If it works, operators could standardize on Ethernet end-to-end, simplify tooling, and avoid vendor lock-in while still getting the performance that model developers demand.

Who’s at the table — and what each brings

The roster spans the full stack: GPU/CPU vendors, switch silicon makers, NIC teams, hyperscalers, and labs that actually run frontier training. That mix matters because performance is an ecosystem property. Switch ASIC features are only useful if NICs expose them, drivers use them, and schedulers understand the fabric’s real limits. With cloud operators and model builders sitting next to silicon teams, ESUN can turn best-effort Ethernet into engineered Ethernet: clear traffic classes, precisely specified ECN (Explicit Congestion Notification) and RED (Random Early Detection) behavior, and fabric-wide telemetry that holds up under real jobs, not just microbenchmarks.
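To make the ECN/RED point concrete, here is a minimal sketch of the classic RED-style marking curve that Ethernet switches commonly use to decide when to set ECN marks. It is not taken from any ESUN specification: the function name, the thresholds, and the choice of kilobytes as the queue-depth unit are all illustrative assumptions.

```python
def ecn_mark_probability(avg_queue_kb, min_threshold_kb, max_threshold_kb, max_probability):
    """Return the probability of ECN-marking a packet under a RED-style curve.

    Below min_threshold_kb nothing is marked; between the two thresholds the
    probability ramps linearly up to max_probability; at or above
    max_threshold_kb every packet is marked. All parameters are hypothetical
    tuning knobs, not values from a published spec.
    """
    if min_threshold_kb >= max_threshold_kb:
        raise ValueError("min_threshold_kb must be below max_threshold_kb")
    if not 0.0 <= max_probability <= 1.0:
        raise ValueError("max_probability must be between 0 and 1")

    if avg_queue_kb < min_threshold_kb:
        return 0.0
    if avg_queue_kb >= max_threshold_kb:
        return 1.0

    # Linear ramp between the two thresholds.
    ramp = (avg_queue_kb - min_threshold_kb) / (max_threshold_kb - min_threshold_kb)
    return max_probability * ramp


if __name__ == "__main__":
    # Illustrative thresholds only: start marking at 100 KB, mark everything at 300 KB.
    for depth_kb in (50, 200, 400):
        p = ecn_mark_probability(depth_kb, 100, 300, 0.1)
        print(f"queue depth {depth_kb} KB -> mark probability {p:.3f}")
```

The interoperability concern is visible even in this toy version: two switches that agree on the thresholds but differ in how they average queue depth or shape the ramp will mark the same traffic differently, which is the kind of drift a precise spec is meant to prevent.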

ESUN vs. InfiniBand (and where UEC fits)

ESUN isn’t a “rip and replace” campaign. It acknowledges that InfiniBand still leads on some scale-up metrics today, but argues that Ethernet can close the gap using open, reproducible mechanisms. ESUN’s work is also expected to align with broader Ethernet efforts (like the Ultra Ethernet Consortium) so operators aren’t betting on a dead-end fork. In short: ESUN targets rack-local, GPU-dense networks; UEC and friends cover the wider Ethernet roadmap; and operators can choose the mix that fits each cluster generation.

What to watch for in the next 6–12 months

The near-term test is credible, public interop across at least two switch ASICs and two NIC families, under workloads that look like actual training runs (not just ping-pong latency tests). Watch for open test plans, reference configs, and packet-level telemetry that show how the fabric behaves under bursty collective operations. Also expect vendors to ship ESUN-aligned features in upcoming switches and adapters, plus tooling that exposes queue health, congestion marks, and flow fairness at the pod level. If early results hold, hyperscalers may start dual-tracking: InfiniBand for legacy racks, Ethernet for new pods.
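Flow fairness can be summarized in a single number. One widely used metric is Jain's fairness index, sketched below. ESUN has not said which fairness metric its tooling will report, so treat this as one plausible choice; the function name and the sample throughput values are hypothetical.

```python
def jain_fairness_index(throughputs):
    """Compute Jain's fairness index for a list of per-flow throughputs.

    The index is (sum x)^2 / (n * sum x^2). It equals 1.0 when every flow
    gets the same throughput and falls toward 1/n as one flow takes it all.
    """
    if not throughputs:
        raise ValueError("need at least one flow")
    if any(x < 0 for x in throughputs):
        raise ValueError("throughputs must be non-negative")

    total = sum(throughputs)
    sum_of_squares = sum(x * x for x in throughputs)
    if sum_of_squares == 0:
        raise ValueError("at least one flow must carry traffic")

    return (total * total) / (len(throughputs) * sum_of_squares)


if __name__ == "__main__":
    # Hypothetical per-flow throughputs in Gb/s during a collective operation.
    balanced = [5.0, 5.0, 5.0, 5.0]
    starved = [10.0, 0.0, 0.0, 0.0]
    print("balanced:", jain_fairness_index(balanced))
    print("starved: ", jain_fairness_index(starved))
```

A pod-level dashboard could track this index per traffic class over the course of a job; a sustained dip during collective operations would point to some flows being starved even when aggregate throughput looks healthy.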

Risks and realities

Ethernet’s strength — diverse vendors and a huge install base — is also a risk. If implementations drift, operators get paper standards and real headaches. ESUN’s best shot is tight, testable specs and public plugfests that catch differences early. Another reality: some features may require new silicon spins, so availability timelines will be uneven. Finally, success isn’t just latency; it’s predictability. Model teams need runs that finish on time, every time, not just pretty microsecond numbers.

Bottom line

ESUN is the clearest push yet to make Ethernet a first-class citizen inside AI racks, not only between them. With a heavyweight coalition and an open process under OCP, ESUN has the right ingredients. If the group can turn specs into shipping, interoperable gear, operators get simpler networks, richer tooling, and a path away from lock-in, without sacrificing the performance that frontier training demands.