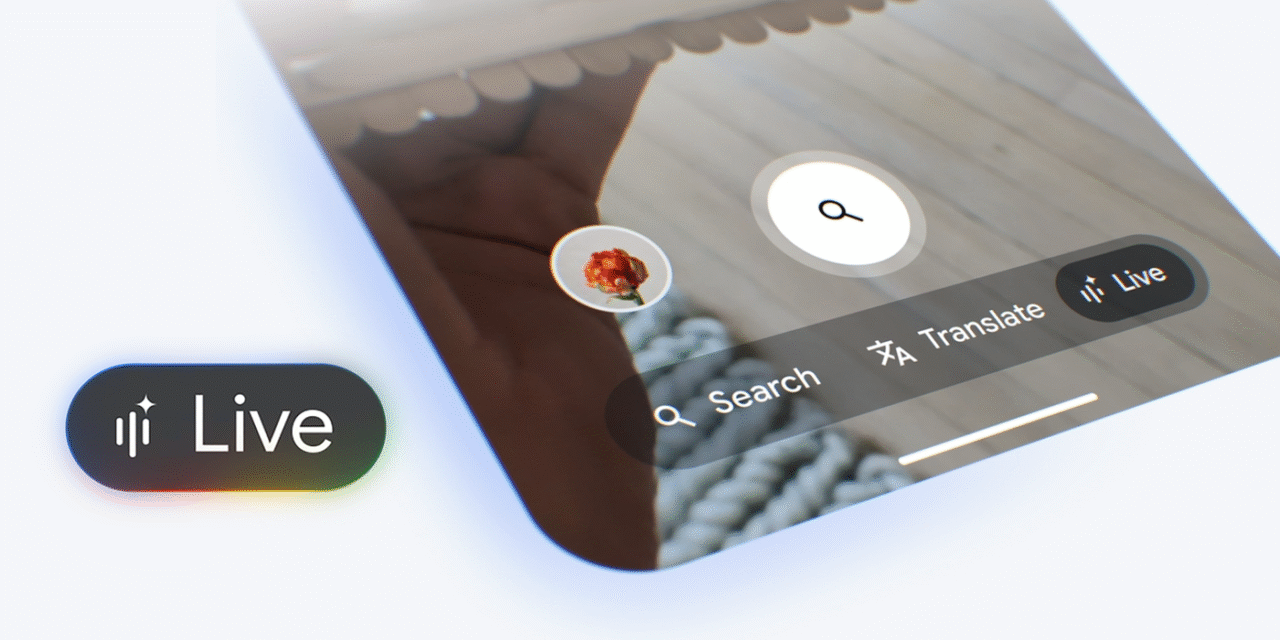

Google has begun rolling out Search Live inside AI Mode: a real-time, conversational way to search that uses your voice and, when you choose, your camera. It's designed for moments when typing is clumsy and context matters, such as fixing something, shopping on the go, or simply understanding what you're looking at.

What is Search Live?

Search Live is a multimodal search experience that lets you talk to Google, show it what you’re seeing, and get answers that evolve as the situation changes. Instead of issuing a single query and sifting through links, you hold a conversation: ask a question, add visual context, and refine the result with natural follow-ups. The key shift isn’t just speed—it’s context persistence, so the system remembers what it’s “looking at” and adjusts guidance without making you start over.

Timeline & availability

Google previewed the idea at Google I/O 2025 and opened early access to testers over the summer, then launched broadly in the United States (English) in late September 2025. A companion how-to from Google detailed the entry points and controls, clarifying that Search Live lives inside the AI Mode experience in the Google app. Near the end of September, Google also shipped a visual upgrade to AI Mode that makes many Live scenarios, especially inspiration and shopping, feel richer and easier to scan.

How to use it (step by step)

Open the Google app on Android or iOS, tap into AI Mode, and choose Live to start a session. You can speak naturally—“What’s going on with this?”—and, if it helps, enable the camera stream so Search can see the object, device, or scene in front of you. Keep the conversation going with clarifying questions like “show me the exact step,” “is there a cheaper alternative,” or “make it beginner-friendly,” and Live will refine the response in place.

What can you do with Live? Practical scenarios

For fix-it tasks, point your camera at the problem—say, a 3D printer extruder clicking—and ask what to check; Live can guide you through diagnostics while you keep filming. For shopping and discovery, describe the vibe or constraint—“compact, quiet mechanical keyboard under $120”—and Live returns visual suggestions that you can narrow with brand, price, or feature follow-ups. For understanding the world, use it like a rolling explainer: scan a label, a component, or a menu, and ask what it means, how to use it, or what to pick instead.

How it differs from Voice Search, Lens, and AI Overviews

Classic Voice Search is a one-shot query with a spoken answer, and Lens identifies things from a still or a frame. AI Overviews summarize web information above the traditional results. Search Live blends these modes: it’s continuous and multimodal, accepting both audio and live video, while keeping context across turns so you don’t have to rephrase or reload. This makes it especially strong for tasks that benefit from back-and-forth, not just a single reply.

The late-September visual upgrade (why it matters)

Alongside the rollout, AI Mode received a more visual presentation for inspiration and shopping queries. Results now lean on image collages, tappable product tiles, and richer descriptions that update as you refine your ask. For users, this shortens the path from “I’m not sure what I want” to “that’s exactly it,” and for publishers and brands, it raises the importance of high-quality images and structured product data.

Privacy, controls, and guardrails

You control when the camera is on, and you can stop a Live session at any time; the experience follows Google’s standard Search policies and safety systems. Sensitive or regulated topics may yield limited assistance or steer you toward authoritative resources rather than step-by-step guidance. In practice, Live is optimized for practical, everyday tasks where visual context improves accuracy, not for medical, legal, or other specialist advice.

Limitations and pro tips

At launch, the broad rollout covers US English only, with additional regions and languages expected to follow in Google's usual staged fashion. Performance depends on network quality and on your device's ability to stream stable video; if latency creeps in, switch briefly to voice-only or snap a single still image with Lens instead. For site owners, the visual emphasis means image quality, alt text, and structured data (products, ratings, availability) matter more than ever if you want your pages to surface well inside AI Mode and Live.
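For site owners wondering what "structured data (products, ratings, availability)" looks like in practice, here is a minimal sketch that emits schema.org Product markup as JSON-LD. It assumes the standard schema.org `Product`, `Offer`, and `AggregateRating` types; the helper names, product name, URLs, price, and rating values are hypothetical placeholders, and nothing here is a documented ranking signal for AI Mode or Live.

```python
import json


def product_jsonld(name, image_urls, description, price, currency,
                   in_stock, rating_value, review_count):
    """Build a schema.org Product object and return it as a JSON-LD string.

    Hypothetical helper: field names follow the schema.org vocabulary,
    but which fields Google actually uses for AI Mode is not documented here.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "image": image_urls,  # several high-quality images, not one thumbnail
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # price as a decimal string, e.g. "109.99"
            "priceCurrency": currency,  # ISO 4217 code such as "USD"
            "availability": ("https://schema.org/InStock" if in_stock
                             else "https://schema.org/OutOfStock"),
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld):
    """Wrap JSON-LD in the script element that goes into the page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical product echoing the "quiet keyboard under $120" example.
    markup = product_jsonld(
        name="Example Compact Quiet Mechanical Keyboard",
        image_urls=["https://example.com/images/keyboard-front.jpg",
                    "https://example.com/images/keyboard-side.jpg"],
        description="A compact, quiet mechanical keyboard (placeholder text).",
        price=109.99,
        currency="USD",
        in_stock=True,
        rating_value=4.6,
        review_count=212,
    )
    print(script_tag(markup))
```

The printed `<script>` tag would go into the product page's HTML; Google's Rich Results Test is one way to check that the markup parses before publishing.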

Bottom line

Search Live is a meaningful step toward agentic, multimodal search: fast, conversational, and context-aware. It shines when the answer depends on what you’re looking at or when a single query can’t capture the nuance of your task. If you bounced off earlier experiments, try Live now—the flow is smoother, results are more visual, and it genuinely cuts friction in the moments when you need help right now.